Betfair Casino is one of the most popular online casinos in the world, and for good reason. It offers a varied selection of games, polished design and functionality, excellent customer service, and many other advantages that meet the needs and preferences of a diverse player base.

Betfair Casino offers a wide range of games, including classic and modern slots, table games, video poker, and more. Players can also enjoy live casino games with real-time interaction with professional dealers for a fully immersive experience. With so many options, players of any preference or skill level should find something to suit them.

The platform is designed to provide a smooth, enjoyable experience. Its user-friendly interface makes navigation easy, and because it works on both desktop and mobile devices, players can reach their favorite games anytime, anywhere.

Betfair Casino’s commitment to customer service shows in its responsive, knowledgeable support team, which is available to help with any questions or issues. Besides live chat, email, and phone support, the website offers a comprehensive Frequently Asked Questions (FAQ) section and other resources to help players find information on their own.

By consistently providing a wide range of games, an easy-to-navigate interface, excellent customer service, and many other benefits, Betfair Casino has established itself as a top destination for online gaming enthusiasts worldwide.

Overview of Betfair Casino and its features

Established in 2000, Betfair Casino is a leading online casino originally owned and operated by Betfair Group plc, which was listed on the London Stock Exchange; following Betfair’s 2016 merger with Paddy Power, the brand is now part of Flutter Entertainment. The casino is licensed by both the UK Gambling Commission and the Malta Gaming Authority, which hold it to strict security and fairness standards.

A major draw is the casino’s large game library of more than one hundred titles, including slots, classic table games, live dealer games, and video poker. The games come from leading software providers such as Playtech, NetEnt, Microgaming, IGT, and Evolution Gaming.

Another notable benefit is mobile compatibility. The casino is optimized for both desktop and mobile, including iOS and Android devices, so players can enjoy their favorite games on the go.

Betfair Casino also caters to different gambling preferences with separate sports betting, live casino, and poker sections.

The casino supports a wide range of payment options, including credit and debit cards, e-wallets, bank transfers, prepaid cards, and digital currency, making deposits and withdrawals quick and convenient.

Security is a priority for Betfair Casino, which uses advanced encryption technology to protect player data and transactions. Independent testing agencies such as eCOGRA and TST also audit the casino’s games regularly to confirm that they meet strict standards of fairness and randomness. Together, these measures make Betfair Casino a dependable and secure gaming platform.

Betfair Casino also runs an affiliate program that lets website owners, bloggers, and social media influencers with sizable followings earn commission by promoting the casino.

In short, Betfair Casino’s varied game selection, mobile access, sports betting, live casino, poker, range of payment options, strong security, and lucrative affiliate program make it an excellent choice for online casino enthusiasts.

Betfair Casino’s game selection and software providers

Betfair Casino offers more than one hundred games, including online slots, table games, live dealer games, and video poker, to suit a wide range of tastes. The games are supplied by reputable software providers, including Playtech, NetEnt, Microgaming, IGT, and Evolution Gaming, which are highly regarded for their graphics, animations, and sound, as well as for their original and varied game catalogs. The collection includes well-known slot titles such as Age of the Gods, Starburst, Gonzo’s Quest, and Cleopatra. The casino also offers an excellent choice of table games, including blackjack, roulette, and baccarat, alongside video poker and live dealer games.

Betfair Casino’s live dealer games, supplied by industry leader Evolution Gaming, offer a highly realistic and engaging experience with real dealers and real-time gameplay.

With its mix of slots, table games, and video poker, the casino caters to players with a wide variety of preferences.

Beyond the casino itself, Betfair offers sports betting, live casino, and poker sections, the last featuring popular games such as Texas Hold’em and Omaha.

In brief, Betfair Casino offers a varied gaming experience, with games from leading software providers spanning many themes, styles, and features.

Bonuses and promotions offered by Betfair Casino

Betfair Casino’s bonuses and promotions are worth a closer look. The casino offers a range of incentives aimed at different players, including welcome bonuses, deposit bonuses, free spins, cashback offers, and more.

Betfair Casino’s standard welcome offer typically consists of a match bonus on the first deposit, often accompanied by free spins or other extras. For example, a player might receive a 100% match on their first deposit, up to a set maximum, plus free spins on a selected slot game.

Betfair Casino also offers regular deposit bonuses, giving players extra rewards when they top up their accounts; these may take the form of match bonuses, free spins, or other incentives. Such bonuses can help players offset potential losses and extend their playing time.

Terms and conditions vary from one bonus or promotion to another, so players should read and understand them carefully before claiming any offer. Some promotions may also be restricted to players from particular countries or regions.

In summary, Betfair Casino offers a wide selection of bonuses and promotions, including welcome bonuses, deposit bonuses, free spins, and cashback offers. These incentives can significantly enhance the gaming experience and give players extra rewards.

Advantages & Disadvantages of Betfair Casino

This section examines the strengths and weaknesses of Betfair Casino. On the plus side, the platform is user-friendly, with a well-organized layout and easy access to games. The casino also offers a wide choice of payment methods, along with a variety of bonuses and promotions. Customer support is excellent, with round-the-clock assistance via live chat, email, and telephone, so players can get prompt help whenever they need it.

There are some drawbacks, however. Betfair Casino is not available to players in certain countries, including the United States, and some players may find the wagering requirements attached to its bonuses somewhat burdensome. Even so, it remains a premium choice for players who value a broad game selection, a streamlined platform, and first-rate customer service. Players in restricted countries, or those who want more flexible wagering requirements, may need to look elsewhere.

Another notable strength is Betfair Casino’s commitment to security and player welfare. The casino uses state-of-the-art encryption to protect player information and transactions and complies with the UK Gambling Commission’s licensing and regulatory requirements.

Betfair Casino also offers support in several languages, including German, Italian, Chinese, and Russian, broadening its reach to a diverse global audience. A mobile-optimized version of the website lets players enjoy their favorite games on the move.

On the downside, as noted above, the casino is unavailable in some countries, including the United States, and some players may find its bonus wagering requirements relatively steep.

Despite these minor limitations, Betfair Casino is a strong option for players seeking a wide range of games, excellent customer service, and a safe, secure gaming environment, and its user-friendly, multilingual interface is designed for a global audience. Prospective players should, however, familiarize themselves with the withdrawal limits and processing times before registering.

Design & Structure of the Online Casino

The design and structure of an online casino cover its overall architecture and layout, as well as its functionality and user experience. This includes the visual and interactive elements that shape how engaging the site feels, such as its design, graphics, audio, navigation, and game selection. The underlying structure also plays a critical role in security, reliability, and compliance with regulatory standards, including responsible gambling and data protection measures. Design and structure are therefore crucial to an online casino’s success in a highly competitive industry.

One notable feature of Betfair Casino’s design is a built-in tutorial mode for selected games. It helps new players learn a game’s rules and mechanics before putting real money at risk.

The casino also offers customization features that let players change the website’s color scheme and background to suit their preferences.

Alongside its traditional casino games, Betfair Casino offers sports betting, live casino, and poker sections, all easily accessible from the main lobby. The key advantage is that players can switch between different forms of gambling on the same platform without leaving the main website.

In summary, careful planning and execution of Betfair Casino’s design and structure have produced a user-friendly platform, with an easily navigable interface, clearly labeled games, and efficient customer support. Its tutorial mode, customization options, and range of gaming categories make it an attractive option for players of all skill levels.

How to Register at Betfair Casino?

Registering at Betfair Casino involves a few simple steps. Before you begin, make sure Betfair Casino is available in your jurisdiction and that you meet the minimum age requirement to play.

After clicking the ‘Join Now’ button, you will be asked to provide personal details such as your full name, home address, and email address. To verify your identity, you will also need to provide a valid, official form of identification, such as a passport or driver’s license.

When choosing your login credentials, pick a combination that is easy to remember but also secure, and keep this information confidential.

Next, you will need to make a deposit before you can start playing. Betfair Casino offers a range of payment methods, including credit and debit cards, e-wallets, bank transfers, and prepaid cards. It is worth taking advantage of any available welcome bonuses or promotions.

Once you have registered and made your first deposit, you can access Betfair Casino’s full selection of games, including slots, classic table games, live dealer games, and video poker.

Betfair Online Casino

Betfair Online Casino is an internet-based casino offering a diverse selection of games, including online slots, table games, live dealer formats, video poker, and much more. The games are powered by leading software providers such as Playtech, NetEnt, and Microgaming, giving players high-quality graphics, engaging gameplay, and innovative features.

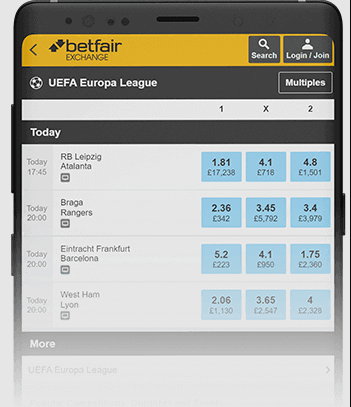

One distinctive feature of Betfair Casino is its exchange betting model, which lets players bet against one another rather than solely against the house. This creates a more interactive and competitive atmosphere than conventional casino gambling and sets Betfair apart from its competitors.

In addition to its extensive range of casino games, Betfair Casino has dedicated sports betting, live casino, and poker sections. Players can move easily between different forms of gambling without leaving the main website, which suits a broad range of interests.

Betfair Casino’s website is optimized for mobile devices, including both iOS and Android, so players can access their favorite games from their phones or tablets. This added convenience and accessibility considerably improves the overall gaming experience.

To serve a global clientele, Betfair Casino supports several languages, including English, Spanish, French, German, Italian, Chinese, and Russian. It also offers a range of payment options, including credit and debit cards, e-wallets, wire transfers, and prepaid cards, making it easy for players around the world to move funds.

Betfair Casino also provides a comprehensive selection of incentives and promotions, including welcome bonuses, deposit bonuses, free spins, cashback schemes, and other bonuses.

Betfair Casino prioritizes the safety and security of its players, using advanced encryption technology to protect player information and transactions. It also provides round-the-clock customer service via live chat, email, and telephone, so help is available at any time.

What Software Providers Power the Casino?

Betfair Casino is powered by a number of leading software providers, including Playtech, NetEnt, Microgaming, IGT, and Evolution Gaming. These industry leaders are known for high-quality, innovative games that appeal to a broad range of players.

Playtech supplies a wide selection of slots, classic table games, and live dealer games, known for their striking graphics and immersive gameplay.

NetEnt has built a reputation for innovative, distinctive slot games, including perennial favorites such as Starburst and Gonzo’s Quest.

Microgaming, a major player in the gambling industry, is known for progressive jackpot games such as Mega Moolah and Major Millions. It also offers a large collection of video slots and table games to suit different tastes.

Betfair Casino’s live dealer games come from Evolution Gaming, a leading live casino software provider. With real dealers and real-time gameplay, these games bring an authentic casino experience into players’ homes.

By drawing on software from several providers, Betfair Casino can offer a broad collection of games with diverse themes, styles, and features, giving players plenty of choice.

In summary, Betfair Casino’s software providers are known for high-quality, innovative, and engaging games that contribute greatly to an enjoyable experience for players worldwide.

What Casino Games Can I Play at Betfair Casino?

Betfair Casino offers a diverse range of gambling options. Beyond the popular games already mentioned, it has an extensive selection of slots, table games, video poker, and live dealer games to suit every player. Examples include:

- Slots: Book of Ra, Thunderstruck II, Gladiator, and many more.

- Table games: Casino Hold’em, Craps, Pai Gow Poker, and others.

- Video poker: Deuces Wild, Jacks or Better, Joker Poker, and other variants.

- Live dealer games: Live Roulette, Live Blackjack, Live Baccarat, and similar titles for players who want a more interactive experience.

Betfair Casino also offers a dedicated sports betting section, a live casino, and a poker room featuring popular games such as Texas Hold’em and Omaha, catering to a wide range of gambling preferences.

New games are added regularly, keeping the experience fresh. With a wide selection of themes, styles, and features, Betfair Casino offers a diverse and engaging gaming experience.

Betfair Mobile Casino

The Betfair mobile casino offers a smooth, enjoyable experience, letting players enjoy their favorite casino games on the move. It is optimized for both iOS and Android, making it easy to use on any mobile device. A wide selection of games, including slots, table games, live dealer games, and video poker, is available on mobile, with graphics and gameplay of the same high quality as the desktop version.

Mobile users can also access the sports betting, live casino, and poker sections, giving them the same breadth of gambling options on their devices.

Like the desktop version, the Betfair mobile casino offers multiple payment methods and an extensive selection of bonuses and promotions. Customer support is also available via live chat, email, and telephone, so players can get help whenever they need it.

Overall, the Betfair mobile casino is a strong choice for players who want to enjoy casino games on the go, offering a wide selection of games and a streamlined experience that is accessible at any time.

Betfair Casino’s mobile app and mobile compatibility

Betfair Casino offers a mobile app for iOS and Android, letting players conveniently access their favorite casino games on the go.

The app offers the same range of games as the desktop site, including slots, table games, live dealer games, and video poker.

Betfair Casino also offers a mobile-friendly website that players can access through their mobile web browsers. This version is optimized for iOS and Android devices and provides a user experience similar to that of the app.

The mobile versions of the casino include all the features of the desktop version, including the sports betting, live casino, and poker sections, as well as customer support, banking options, and promotions.

Overall, Betfair Casino offers an outstanding mobile gaming experience, with both the app and the mobile website providing smooth, reliable performance. This reflects the casino’s effort to serve the growing mobile gaming market. With a wide variety of games, an intuitive interface, and all the functionality of the desktop version, players can enjoy their favorite casino games from anywhere.

Security and fairness of the games

Betfair Casino has a reputation for dependable service. It is regulated by the Malta Gaming Authority and the UK Gambling Commission, which require the casino to maintain strict security and fairness standards on an ongoing basis.

Betfair Casino uses 128-bit SSL encryption to protect the confidentiality and integrity of users’ personal and financial information, securing every transaction and all related sensitive data.

Esteemed independent auditing entities, such as eCOGRA and TST, conduct regular assessments of the Random Number Generator (RNG), ensuring the fairness and randomness of the games.

External bodies are responsible for verifying the accuracy and fairness of the casino’s payout rates. The games are regularly evaluated for fairness, and the results are made publicly available.

Betfair Casino is committed to providing a fair and secure gaming experience for all its players. The company places considerable emphasis on the integrity of its games and routinely takes steps to ensure they meet established industry standards.

In summary, Betfair Casino is a reputable, trustworthy online casino that offers a diverse range of games in a secure and fair environment. Players can play with confidence, knowing that their personal and financial data is protected and that the games are fair.

Betfair Casino Affiliates

Betfair Casino Affiliates is an online program through which individuals promote the Betfair Casino brand to a wider audience. It provides tools to create and manage marketing campaigns, track earnings and performance, and access a wide range of promotional materials. Affiliates earn commission for driving traffic and encouraging new players to sign up and play. The program offers the chance to build long-term partnerships and a rewarding career as a casino affiliate marketer.

The program is a potentially profitable opportunity for website owners, bloggers, and social media influencers with large followings to earn extra income by promoting a secure and trustworthy online casino. Its commission structure is attractive, with rates ranging from 25% to 40% depending on the number of referred players and the revenue they generate.

As a Betfair Casino affiliate, you will have access to marketing resources such as banners, text links, and landing pages to help you promote the casino effectively. Detailed performance reports and statistics let you monitor your campaigns and refine your tactics.

To support these efforts, Betfair Casino Affiliates also provides a wide range of creative materials and a dedicated support team to help with any questions or issues.

In brief, the Betfair Casino Affiliate program is an effective way to earn revenue by promoting Betfair Casino. Its competitive commission structure, range of marketing tools, and dedicated support team are designed to help affiliates succeed.

Payment Methods

Betfair Casino provides an extensive selection of payment options for both deposits and withdrawals, accommodating players’ varied preferences. These include:

- Credit and debit cards, including Visa, MasterCard, Maestro, and others.

- E-wallets, such as PayPal, Skrill, and Neteller.

- Direct bank transfers for depositing funds into your Betfair account.

- Prepaid cards, notably Paysafecard, which is popular for its ease of use and flexibility.

- Cryptocurrency, such as Bitcoin, a decentralized digital currency that operates independently of banks and governments.

The minimum deposit at Betfair Casino is INR 500 and the minimum withdrawal is INR 1,000, although these limits may vary depending on the payment method chosen.

Note that not every payment method is available in every country, and deposit and withdrawal limits can differ between methods. Players should check the terms and conditions for each payment method to understand any restrictions that apply.

To protect the confidentiality and integrity of players' personal and financial data, Betfair Casino uses advanced encryption technology for all transactions. This commitment to security gives players peace of mind when depositing and withdrawing funds.

In brief, Betfair Casino provides a comprehensive range of payment options, including credit and debit cards, e-wallets, bank transfers, and more, making it easy for players to manage their funds. The casino also prioritizes secure transactions to protect players' personal and financial information at all times.

Withdrawal process

The withdrawal process at Betfair Casino is designed to be simple, convenient, and secure. To request a withdrawal, follow these steps:

- Log in to your Betfair Casino account.

- Go to the 'Cashier' section of your account.

- Select the 'Withdrawal' option.

- Choose your preferred payment method for the withdrawal.

- Enter the amount you wish to withdraw and follow any further instructions.

Betfair Casino aims to process withdrawal requests within 24 hours. How long the funds then take to reach a player's account depends on the payment method selected: e-wallet withdrawals are usually the fastest, typically arriving within 24 hours, while withdrawals to credit/debit cards or by bank transfer may take up to five business days.

Betfair Casino uses advanced encryption technology to protect players' personal and financial data during all transactions, so players can withdraw their winnings with peace of mind.

In brief, Betfair Casino offers a straightforward, user-friendly withdrawal process, a wide variety of payment options, and a secure platform for transactions, so players can withdraw with confidence that their personal and financial details are protected.

User experience and overall impression of Betfair Casino

Betfair Casino offers a positive and enjoyable user experience. With a user-friendly layout, a comprehensive range of games, mobile compatibility, excellent customer service, a variety of bonuses and promotions, and a range of payment options, it appeals to a wide spectrum of player interests and needs. The key features that contribute to this experience include:

- The website's interface is well organized and easy to navigate, allowing players to quickly find their favorite games and access key features.

- Betfair Casino offers an impressive range of games, including slots, table games, live dealer games, and video poker, all developed by leading software providers.

- The casino has a well-designed mobile version optimized for iOS and Android devices, so players can enjoy their favorite games on the go.

- Betfair Casino offers a range of gambling options tailored to various player preferences, which include sports betting, live casino, and poker sections.

- The support team is available around the clock via live chat, email, and telephone, ensuring that players can get help whenever they need it.

- The casino offers a variety of bonuses and promotions, including welcome bonuses, deposit bonuses, free spins, cashback offers, and other rewards. Players should carefully read and understand the terms and conditions before claiming any promotional offer.

- Betfair Casino offers a diverse range of payment options to facilitate both deposit and withdrawal processes, thus ensuring a seamless and secure transactional experience for its players.

- Overall, Betfair Casino provides a comprehensive and enjoyable gaming environment, making it an attractive choice for both newcomers and experienced online casino players.

Pros and cons of Betfair Casino

Pros of Betfair Casino:

- More than 100 casino games, including online slots, table games, live dealer games, and video poker.

- Games from top software providers, featuring state-of-the-art graphics, animations, and sound effects.

- An intuitive website design with easy navigation.

- Full mobile compatibility, so players can enjoy their favorite games on the go.

- Additional gaming options, including sports betting, live casino, and poker sections.

- Round-the-clock customer support via live chat, email, and telephone.

- A generous welcome bonus, plus regular promotions and bonuses.

- Numerous payment methods for easy deposits and withdrawals, including credit and debit cards, e-wallets, bank transfers, prepaid cards, and cryptocurrency.

- Licensing and regulation by both the Malta Gaming Authority and the UK Gambling Commission, ensuring strict security and fairness standards.

Cons of Betfair Casino:

- Betfair Casino is only available in a limited number of countries due to legal restrictions, which limits its reach among players worldwide.

- Bonuses and promotions often come with wagering requirements, which some players may find difficult to meet before they can withdraw their winnings.

- Although Betfair Casino works in mobile web browsers, there is no dedicated mobile app for iOS or Android.

Customer support and security measures

Customer support covers the help Betfair Casino gives its players, while security measures are the protections it puts in place to safeguard their interests.

Betfair Casino's customer support is designed to give players a reliable and helpful way to resolve any problems that arise. The support team is available around the clock via live chat, email, and telephone, so players can get prompt help whenever they need it.

The Betfair Casino website also has a comprehensive FAQ section that serves as a valuable source of information for players. It covers topics such as account management, deposits and withdrawals, game rules, responsible gambling, and technical issues, allowing players to resolve common problems without contacting the support team.

Security is a top priority at Betfair Casino. The casino uses modern encryption technology to protect players' personal and financial details and all transactions, providing a secure gaming environment.

To ensure fair gaming, Betfair Casino is regularly audited by independent testing agencies such as eCOGRA and TST. These agencies verify that the casino's games are fair and random and that the casino strictly adheres to security and fairness standards.

Betfair Casino's commitment to high industry standards is reflected in its licensing and regulation by both the Malta Gaming Authority and the UK Gambling Commission. These licenses require the casino to comply with the relevant regulations and to provide a fair and secure gaming environment for players.

In summary, Betfair Casino's emphasis on excellent customer service, a comprehensive FAQ section, robust security protocols, and strict regulatory compliance provides a safe and enjoyable gaming experience for its customers. Its reliable support system and secure environment make it an attractive choice for players looking for a trustworthy online casino.

FAQ

Yes, all of the games at Betfair Casino can be played for free in demo mode. This is a great way to try out the games before you start playing for real money.

The minimum deposit amount is 500 INR.

Withdrawals are usually processed within 24 hours.

Yes, Betfair Casino is a safe and secure place to play. The casino uses the latest encryption technology to protect your personal and financial information.

Yes, all of the games at Betfair Casino are fair and random. The casino is regularly tested by independent testing agencies, such as eCOGRA and TST. You can be sure that you’re getting a fair game when you play at Betfair Casino.

Yes, Betfair Casino is fully licensed and regulated by the Malta Gaming Authority and the UK Gambling Commission. The casino uses the latest encryption technology to protect player information and transactions, ensuring that it is a safe and secure place to play.

Betfair Casino offers a wide range of games, including online slots, table games, live dealer games, video poker, and more. The casino features games from leading software providers such as Playtech, NetEnt, and Microgaming.

Yes, Betfair Casino can be accessed on both desktop and mobile devices. The mobile casino is optimized for both iOS and Android devices, making it easy to navigate and use on any device.

Yes, all of the games at Betfair Casino are fair and random. The casino uses a Random Number Generator (RNG) to ensure that the outcome of all games is completely random and unbiased. The RNG is regularly tested and audited by independent testing agencies such as eCOGRA and TST, which certify that the games are fair and random.

Betfair Casino offers a wide range of payment methods, including credit and debit cards, e-wallets, bank transfers, prepaid cards, and cryptocurrency. Deposit and withdrawal limits can vary depending on the payment method used.

You can contact a member of the customer support team 24/7 via live chat, email, or phone if you need any assistance. The customer support team is always happy to help with any query, no matter how big or small.

Yes, the Betfair Casino affiliate program is a great way to earn commission by promoting Betfair Casino. As an affiliate, you will receive a commission for every new player that you refer to the casino. You can sign up for an account on the Betfair Affiliates website and start promoting Betfair Casino right away.

Conclusions

Betfair Casino has earned a strong reputation as a leading online casino, known for its diverse range of games, excellent customer support, and robust security measures. The casino is licensed and regulated by the Malta Gaming Authority and follows strict protocols to ensure a secure and enjoyable gaming experience for its players.

One of Betfair Casino's strengths is its extensive selection of games from leading software providers such as NetEnt and Playtech. It also offers numerous promotions, bonuses, and a rewarding loyalty scheme, and its user-friendly interface and simple navigation enhance the overall experience.

However, Betfair Casino does have some drawbacks. It is not available in certain countries, such as the United States, which excludes some players. In addition, withdrawals may be slower than at some competitors, which could concern players who value fast payouts.

Betfair Casino's customer support staff are responsive and helpful, offering assistance around the clock via live chat, email, and telephone. The casino also offers a wide range of payment methods for deposits and withdrawals, accommodating players' differing preferences.

Security and fairness are central to Betfair Casino's operations. The casino uses SSL encryption to protect players' personal and financial data, and its games are regularly reviewed by independent auditors to confirm that outcomes are fair and unbiased.

Betfair also runs a dedicated affiliate program for people interested in promoting the casino, allowing affiliates to earn commission by referring new players.

Overall, Betfair Casino is a well-established and highly regarded online casino, known for its extensive range of high-quality games and its attractive promotions and bonuses. Its user experience, customer support, and security protocols are also strong. However, prospective players should be aware that the casino is not available in some jurisdictions and that withdrawals may be slower than at some competitors.